What Is Apple Intelligence in iOS 27?

Apple Intelligence in iOS 27 is not a simple feature update. It represents a structural shift in how Siri processes, remembers, and acts on information across Apple’s entire device ecosystem.

The centerpiece is an internal project Apple calls “Campo,” first surfaced ahead of WWDC 2026 (June 8-12). Campo reframes Siri from a reactive voice assistant into what Apple describes as an autonomous agent capable of executing multi-step tasks across third-party apps without constant user prompting.

WWDC 2026: The “Intelligence Reborn” Keynote

Apple’s WWDC 2026 keynote carried the theme “Intelligence Reborn,” a deliberate signal that the company views this release as a categorical departure from previous Siri iterations.

The event ran June 8-12, 2026, with the keynote anchoring the Campo architecture announcement alongside SiriKit 4.0 and Private Cloud Compute 2.0. These three components are designed to work as a single system, not as isolated features.

The Campo Architecture: Three Core Pillars

Persistent Conversational History

Siri in iOS 27 maintains a localized vector database of user interactions spanning months. This is stored on-device, meaning Apple’s servers do not hold the raw conversational record.

The practical result: Siri can reference a task you discussed three weeks ago without you re-explaining context. This is a meaningful departure from the stateless, session-by-session model that defined Siri for over a decade.
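To make the idea of a local vector database concrete, here is a minimal sketch of similarity-based recall. Everything in it is an assumption for illustration: the `LocalMemory` class, the bag-of-words `embed` function, and the sample entries are hypothetical stand-ins, not Apple's implementation, which has not been published. A real system would use a learned embedding model rather than word counts.

```python
import math
from collections import Counter


def embed(text):
    """Toy embedding: a bag-of-words count vector (stand-in for a learned model)."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class LocalMemory:
    """Hypothetical in-process store: entries never leave this object."""

    def __init__(self):
        self._items = []  # list of (original text, embedding) pairs

    def remember(self, text):
        self._items.append((text, embed(text)))

    def recall(self, query, k=1):
        """Return the k stored entries most similar to the query."""
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


memory = LocalMemory()
memory.remember("book flights to Lisbon for the design conference")
memory.remember("renew the car insurance before Friday")
memory.remember("draft the quarterly budget summary")

print(memory.recall("what was the plan for the Lisbon conference"))
```

The point of the sketch is the retrieval pattern: a later query is matched against stored interactions by vector similarity, so the user does not have to restate the earlier context.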

Multimodal Action Engine

The Action Engine allows Siri to interact directly with third-party apps via standardized API hooks, without requiring the user to manually navigate between applications.

This goes beyond reading screen content. Siri can execute actions inside apps, draft content, submit forms, and chain tasks across multiple services in a single instruction. Developer access opens through SiriKit 4.0, which Apple is making available to all App Store developers.

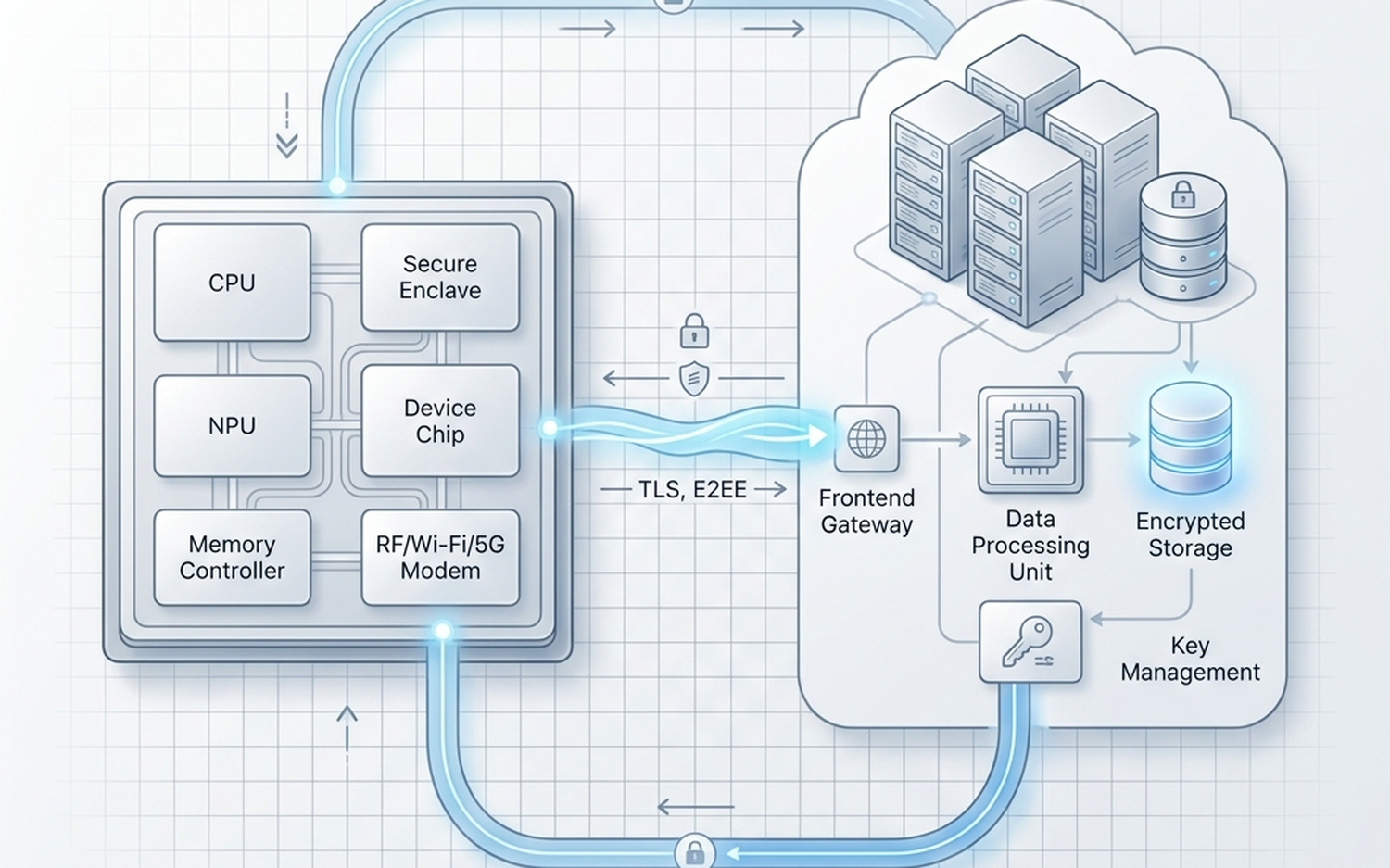

Hybrid On-Device and Cloud Processing

Apple’s A20 chip, built on TSMC’s N3P process, handles approximately 70% of Siri requests entirely on-device, according to Apple’s official technical documentation shared at WWDC 2026.

The remaining workload routes to Private Cloud Compute 2.0, Apple’s scaled cloud infrastructure for generative tasks. Apple states PCC 2.0 operates under a “Zero-Data-Retention” policy, independently verified by third-party auditors. The auditor identities and methodology have not been fully disclosed publicly, which is worth noting when evaluating that claim.
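A rough sketch of how a hybrid router could split requests between the device and the cloud is shown below. The routing rule, the `Request` fields, and the token threshold are all assumptions made for illustration; Apple has not published the actual routing criteria. The sample workload is constructed so that it reproduces the reported 70/30 split.

```python
from dataclasses import dataclass


@dataclass
class Request:
    text: str
    needs_generation: bool   # hypothetical flag: long-form generative task
    estimated_tokens: int    # hypothetical size estimate


ON_DEVICE_TOKEN_BUDGET = 2_000  # assumed threshold, not an Apple figure


def route(request):
    """Return 'on_device' or 'private_cloud' for a single request."""
    if request.needs_generation or request.estimated_tokens > ON_DEVICE_TOKEN_BUDGET:
        return "private_cloud"
    return "on_device"


def on_device_share(requests):
    """Fraction of requests that the router keeps on the device."""
    routes = [route(r) for r in requests]
    return routes.count("on_device") / len(routes)


# Illustrative workload: seven light requests, three heavy generative ones.
sample = (
    [Request("set a timer", False, 12)] * 7
    + [Request("write a long short story", True, 3_000)] * 3
)

print(on_device_share(sample))
```

In practice the split would depend on the real mix of requests a user makes, so the 70% figure is best read as an aggregate rather than a guarantee for any individual workload.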

iOS 27 Feature Breakdown

Dynamic Prompts

Dynamic Prompts are predictive suggestions that adapt based on the user’s physical location and, according to pre-release leaks and analyst reports, detected cognitive load signals pulled from Apple Watch and Vision Pro biometric data.

This feature is currently reported through iOS 27 leaks rather than confirmed official specification sheets. Treat it as high-probability but unconfirmed until Apple’s public release notes.

Handoff 3.0

Handoff 3.0 extends the existing Continuity framework to carry full AI task state between devices. A complex drafting task started on iPhone can transfer to Mac with complete context intact, including intermediate AI reasoning steps.
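Conceptually, carrying task state between devices comes down to serializing the in-progress work on one side and restoring it on the other. The sketch below illustrates that round trip with JSON. The `TaskState` fields and both function names are hypothetical; the actual Handoff 3.0 payload format has not been documented.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class TaskState:
    """Hypothetical snapshot of an in-progress AI task."""
    task_id: str
    draft: str
    context: list = field(default_factory=list)          # prior turns or notes
    pending_actions: list = field(default_factory=list)  # steps not yet executed


def export_state(state):
    """Serialize the task on the sending device."""
    return json.dumps(asdict(state))


def import_state(payload):
    """Rebuild the task on the receiving device."""
    return TaskState(**json.loads(payload))


state = TaskState(
    task_id="draft-42",
    draft="Dear team, here is the summary so far",
    context=["user asked for a formal tone"],
    pending_actions=["attach budget spreadsheet"],
)

payload = export_state(state)      # what would travel between devices
restored = import_state(payload)   # what the second device resumes from
print(restored == state)
```

A production implementation would also need encryption and versioning of the payload, which this sketch leaves out.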

This is particularly relevant for users already invested in Apple’s ecosystem. Our recent breakdown of M5 Max MacBook Pro local AI performance shows how Apple Silicon’s on-device ML capabilities make this kind of state-transfer computationally feasible at the hardware level.

Visual Intelligence

Visual Intelligence integrates real-time camera-based translation and object recognition directly into the core OS layer, not as a standalone app.

The distinction matters because OS-level integration means Visual Intelligence can feed data into the Action Engine, allowing Siri to act on what the camera sees without additional user steps.

Apple’s AI Partnership Strategy

Apple has confirmed a partnership with Google to integrate Gemini 1.5 Pro for specific use cases, primarily advanced creative writing and coding tasks. Apple’s proprietary models retain control over personal context and on-device memory.

This dual-model approach is a calculated hedge. Apple preserves its privacy narrative for sensitive personal data while offloading computationally intensive generative tasks to a model with broader training breadth. The tradeoff is that some tasks will route through Google’s infrastructure, which carries its own data handling implications users should understand.

Competitive Positioning: Siri vs. Galaxy AI vs. GPT-5 Mobile

| Feature | Siri (iOS 27 / Campo) | Galaxy AI (2026) | GPT-5 Mobile | Source / Confidence |
|---|---|---|---|---|
| On-device processing share | ~70% | Not disclosed | Not disclosed | Official (Apple WWDC 2026) / Estimated for competitors |
| Long-term conversational memory | Yes (local vector DB) | Limited (cloud-based) | Yes (cloud-based) | Official (Apple) / Estimated (Samsung, OpenAI) |
| Third-party app action execution | Yes (SiriKit 4.0) | Partial | Limited | Official (Apple) / Estimated (competitors) |
| Cloud data retention policy | Zero-retention (audited) | Standard retention | Standard retention | Official (Apple, third-party audit) / Official (Samsung, OpenAI ToS) |
| Gemini integration | Yes (creative/coding tasks) | Native (Samsung-Google deal) | No | Official (Apple, Google) |
| Biometric-adaptive prompts | Rumored (Watch/Vision Pro) | No | No | Leaked / Unconfirmed (Apple) |
| Developer API access | SiriKit 4.0 (full) | Partial | API available | Official (Apple) / Estimated |

Comparison Verdict

Siri with the Campo architecture leads on privacy architecture and deep OS integration. The 70% on-device processing share is an Apple-reported figure tied to A20 chip performance, and it has not yet been independently verified. For context on how Apple Silicon handles this kind of local inference load, our iPhone 17e specs and benchmarks coverage provides useful baseline data on A-series chip ML throughput.

Galaxy AI in 2026 remains more cloud-dependent, which gives Samsung flexibility in model updates but introduces the data handling tradeoffs that cloud processing always carries.

GPT-5 Mobile appears to offer the strongest raw generative output in preliminary third-party evaluations, but it lacks the personal context layer that Apple’s local vector database provides. It also has no hardware-accelerated on-device execution comparable to Apple Silicon.

Device Requirements and Compatibility

Apple has not published a final compatibility list as of this writing. Based on the A20 chip requirement for 70% on-device processing, the Campo architecture’s full feature set will almost certainly require iPhone models running the A20 or later.

Older devices may access a reduced feature set routed primarily through PCC 2.0, though Apple has not confirmed this tiering officially.

Who Should Care About This Update

Best overall AI assistant experience in 2026: Siri with iOS 27 on A20 hardware, specifically for users who prioritize privacy, long-term context retention, and deep cross-app automation.

Best for raw generative output: GPT-5 Mobile, if your primary use case is creative generation or complex reasoning tasks where personal context is less critical.

Best for Samsung ecosystem users: Galaxy AI 2026 remains the most integrated option for Android users, though its cloud dependency is a persistent consideration.

Who Should Prioritize This Update

Users who rely on Siri for multi-step task automation across apps will see the most immediate benefit from the Action Engine. The persistent memory feature will matter most to power users who currently re-explain context to Siri repeatedly.

Who Can Wait

Users who primarily use Siri for basic queries, timers, and media playback will not notice a significant difference in daily use. The Campo architecture’s advantages are concentrated in complex, multi-step, context-dependent workflows.

| Feature | On-Device (A20) | Private Cloud Compute 2.0 | Google Gemini 1.5 Pro Integration | Source / Confidence |
|---|---|---|---|---|
| Request share | ~70% of all requests | ~30% of requests | Scoped subset (creative/coding) | Official (WWDC 2026) |
| Process node | TSMC N3P | Apple-designed server hardware | Google infrastructure | Official (WWDC 2026) |
| Data retention | Zero (local only) | Zero-Data-Retention (audited) | Request content only, no personal context | Official (WWDC 2026) |
| Audit status | N/A | Independent auditors (identity not disclosed) | Not applicable | Official claim, partial transparency |
| Personal context access | Full | Full (encrypted, attested) | None | Official (WWDC 2026) |
| Raw TOPS / benchmark | Not officially published | Not applicable | Not applicable | Estimated / Pending official release |
| Primary use cases | Common requests, Action Engine, app interaction | Generative tasks, large-context reasoning | Creative writing, advanced coding | Official (WWDC 2026) |

Comparison Verdict: The hybrid model Apple has built is architecturally coherent. On-device processing covers the high-frequency, privacy-sensitive workload. PCC 2.0 handles the compute-intensive generative tasks with an audited privacy guarantee. Gemini 1.5 Pro fills a specific capability gap without touching personal data. The weakest point in this structure is transparency: the auditor identities for PCC 2.0 and the precise A20 benchmark figures remain unpublished, which means the privacy and performance claims are currently based on Apple’s own disclosures rather than independently verifiable data.

Apple’s Modern AI Strategy vs. The Competition

The Campo architecture does not exist in isolation. Apple is making specific, testable claims about how Siri in iOS 27 compares to competing AI systems, and those claims deserve scrutiny rather than acceptance at face value.

Siri (iOS 27) vs. Galaxy AI: Privacy as the Ultimate Feature

Samsung’s Galaxy AI, as deployed in its 2026 flagship lineup, is built primarily on cloud inference. The majority of its AI features route through Samsung’s servers and, depending on the task, through Google’s infrastructure.

Apple’s counter-positioning is explicit: the Campo architecture processes approximately 70% of requests entirely on-device, with the remaining cloud workload running through PCC 2.0’s audited Zero-Data-Retention system. Samsung has not published an equivalent on-device processing ratio, and no independent benchmark has verified Samsung’s data handling claims at the same level of architectural detail Apple provided at WWDC 2026.

The practical difference for users is meaningful. Galaxy AI’s cloud dependency means that feature availability can degrade with connectivity, and the data handling terms are governed by Samsung’s privacy policy rather than cryptographic attestation. Apple’s model, at least in principle, keeps sensitive personal context off external servers entirely.

The caveat is that “in principle” is doing real work in that sentence. Until the PCC 2.0 auditor identities and methodology are published, the privacy advantage is architecturally credible but not yet independently verified.

Benchmarking Siri Agent vs. GPT-5 Mobile Integration

OpenAI’s GPT-5 integration on mobile operates differently from Apple’s model. GPT-5 Mobile routes all requests to OpenAI’s cloud infrastructure, which means it has access to a substantially larger model than anything running on an A20 chip.

On raw generative quality for open-ended tasks, GPT-5 Mobile holds a credible advantage. No official benchmark comparing the two systems has been published, and any third-party comparison at time of writing should be treated as preliminary.

Where Siri in iOS 27 has a structural advantage is personal context. GPT-5 Mobile has no access to a user’s localized vector database, calendar, app state, or interaction history. Siri’s Action Engine can execute multi-step tasks across third-party apps without user intervention. GPT-5 Mobile, as a chat interface, cannot.

The comparison is therefore less about which system produces better prose and more about which system can actually act on your behalf inside your device. On that specific axis, the Campo architecture is ahead of any cloud-only competitor by design, not by model quality.

Hardware acceleration matters here too. The A20 chip on the TSMC N3P process is built for on-device ML inference, which a cloud-only service cannot replicate locally. For latency-sensitive tasks, the on-device path avoids the network round trip entirely, which removes one major source of delay.

The Developer Impact: SiriKit 4.0 and the Open Action Engine

The competitive story is not just about end users. SiriKit 4.0 opens the Action Engine to all App Store developers, which is a significant structural change from previous Siri integrations.

Earlier versions of SiriKit required developers to map their app’s functionality to a fixed set of intent categories. The Action Engine removes that constraint. Developers can now expose arbitrary app actions through standardized API hooks, and Siri can invoke those actions autonomously based on user context.
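The difference between fixed intent categories and open action hooks can be sketched as a simple registry. The code below is a hypothetical illustration only: `ActionRegistry`, the action names, and the chaining method are invented for this example and do not reflect the real SiriKit 4.0 API, which is not described in detail here.

```python
class ActionRegistry:
    """Hypothetical registry where apps expose arbitrary named actions."""

    def __init__(self):
        self._actions = {}

    def register(self, action_name):
        """Decorator an app would use to expose one of its functions."""
        def decorator(fn):
            self._actions[action_name] = fn
            return fn
        return decorator

    def invoke(self, action_name, **params):
        if action_name not in self._actions:
            raise KeyError(f"no app has registered action {action_name!r}")
        return self._actions[action_name](**params)

    def run_chain(self, steps):
        """Execute several (action_name, params) steps in order."""
        return [self.invoke(name, **params) for name, params in steps]


registry = ActionRegistry()


@registry.register("notes.create")
def create_note(title, body):
    return f"note created: {title}"


@registry.register("mail.draft")
def draft_mail(to, subject):
    return f"draft to {to}: {subject}"


results = registry.run_chain([
    ("notes.create", {"title": "Trip plan", "body": "Flights and hotel"}),
    ("mail.draft", {"to": "sam@example.com", "subject": "Trip plan"}),
])
print(results)
```

The design choice worth noting is that the registry does not know in advance which actions exist. Any app can add a new name, which is the contrast with a closed list of intent categories.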

The implication is that the quality of Siri as an agent will compound over time as more apps integrate with SiriKit 4.0. An AI assistant that can only act within Apple’s own apps has limited utility. One that can operate across the full App Store catalog becomes substantially more capable.

Samsung and Google have both announced developer AI programs, but neither has published an equivalent architecture that combines on-device personal context with an open, standardized action layer for third-party apps. Google’s approach relies on its own app ecosystem and cloud routing. Samsung’s Galaxy AI developer tools are less documented at this stage.

The risk for Apple is adoption speed. SiriKit’s historical track record with developers has been mixed. The original SiriKit launched in 2016 and took years to see broad third-party integration. Whether SiriKit 4.0 achieves faster adoption depends on how frictionless the API integration actually is in practice, which will only become clear after the iOS 27 developer beta cycle.

Who Should Pay Attention to This Shift

For users already in the Apple ecosystem with an A20-class device, the Campo architecture represents a meaningful upgrade in what a phone assistant can actually do autonomously.

For developers, SiriKit 4.0 is worth evaluating early. The Action Engine’s open API is the most significant change to Siri’s third-party integration model since the platform launched.

For enterprise and privacy-sensitive users, the on-device processing ratio and PCC 2.0’s audited architecture make iOS 27 a more defensible choice than cloud-first competitors, with the caveat that full audit transparency is still pending.

Users on older hardware or outside the Apple ecosystem will not benefit from the Campo architecture. The on-device processing advantage is specific to A20-class chips, and the Action Engine’s utility scales with how many of your apps have integrated SiriKit 4.0 support.

Hardware Requirements: The Best Devices for the iOS 27 Campo Experience

The Campo architecture is not a software feature that runs uniformly across all Apple hardware. Its capabilities are directly tiered to the Neural Processing Unit inside your device.

The A20 chip, built on TSMC’s N3P process, is the minimum threshold for the full Campo experience. According to Apple’s official WWDC 2026 documentation, the A20 handles 70% of Siri requests entirely on-device. Older chips cannot replicate this ratio, which means older devices will route more requests to Private Cloud Compute and experience higher latency on context-sensitive tasks.

NPU Requirements and Why They Matter

The localized vector database at the core of Siri’s persistent conversational memory requires sustained, low-power matrix operations. That is precisely what a dedicated NPU handles more efficiently than a general CPU or GPU core.

Without a sufficiently capable NPU, maintaining and querying months of interaction history in real time becomes thermally and computationally impractical on a mobile device. The A20’s NPU generation is what makes the on-device memory architecture viable rather than theoretical.

Devices running A19 or earlier chips will access a reduced version of the Campo feature set. Apple has not published a formal compatibility matrix at time of writing, so the exact capability cutoffs per chip generation remain estimated based on developer beta documentation.

The MacBook Pro and Unified Memory Advantage

The Campo architecture extends beyond iPhone. Handoff 3.0, introduced in iOS 27 and its macOS counterpart, allows a complex AI drafting task to transfer from iPhone to Mac with full state awareness, including the active context window and action queue.

On the M5 Max MacBook Pro with 128GB of unified memory, this handoff has a specific performance implication. The unified memory architecture means the NPU, CPU, and GPU share the same memory pool without transfer overhead. A large generative task handed off from an A20 iPhone can continue processing on the M5 Max’s Neural Engine against a substantially larger working memory budget.

For users running memory-intensive AI workflows, the 128GB configuration is not just a bigger number on the spec sheet. It determines how large a context the on-device model can hold simultaneously during a Handoff 3.0 session.

Practical Device Recommendations

For most users, an A20-class iPhone is the entry point that matters. The Campo experience was designed around that chip’s on-device processing ratio, and that is where the majority of daily interactions will occur.

For users who move complex tasks between devices, the M5 Max MacBook Pro’s unified memory architecture makes it the strongest continuation point for Handoff 3.0 workflows. The combination of a high-bandwidth NPU and a large shared memory pool is what separates it from lower-tier Mac configurations for this specific use case.

The Campo architecture is hardware-dependent by design. Apple built the privacy and performance case around on-device processing, and that case only holds when the underlying silicon can actually carry the load.

General FAQ

When will iOS 27 and the Campo architecture be available?

Apple announced iOS 27 at WWDC 2026, which ran June 8-12, 2026. Public release typically follows in September alongside new iPhone hardware, based on Apple’s established release pattern.

Developer betas are available through the Apple Developer Program immediately post-keynote. Public betas generally follow within a few weeks of the developer build.

Which devices will support the full Campo feature set?

The full Campo experience requires an A20-class chip, as confirmed by Apple’s WWDC 2026 documentation. That means the iPhone lineup shipping with the A20 is the primary target hardware.

Devices running A19 or earlier will receive iOS 27 but with a reduced Campo feature set. The exact capability cutoffs per chip generation have not been formally published by Apple at time of writing. Current estimates are based on developer beta documentation, not official compatibility tables.

Does Siri’s persistent conversational memory mean Apple is storing my data?

No, according to Apple’s stated architecture. The localized vector database that powers Siri’s long-term memory is stored on-device, not on Apple’s servers.

For tasks that do route to Private Cloud Compute 2.0, Apple has implemented a “Zero-Data-Retention” policy. This means the cloud compute nodes process the request without retaining the data afterward. Apple states this has been verified by independent auditors, though full audit transparency documentation was still pending at time of writing.

What is Private Cloud Compute 2.0, and is it different from the original?

PCC 2.0 is Apple’s second-generation confidential cloud computing infrastructure. The key claim is that even Apple’s own engineers cannot access the data being processed on those nodes.

The primary upgrade over the original PCC is scale and the addition of third-party independent auditing. The auditing component is significant because it moves the privacy claim from self-attestation toward external verification, though users should wait for the full audit report before treating it as a settled matter.

Will the Gemini integration compromise my privacy?

This is a legitimate concern worth examining carefully. Apple’s partnership with Google integrates Gemini 1.5 Pro specifically for creative writing and advanced coding tasks, according to WWDC 2026 announcements.

Apple’s proprietary models handle personal context tasks, which is where the sensitive interaction history lives. The Gemini integration is scoped to generative tasks that do not require access to your localized vector database. Whether that separation holds in practice is something developer documentation and independent testing will need to confirm over time.

Does SiriKit 4.0 mean all my apps will automatically work with the Action Engine?

No. SiriKit 4.0 opens the Action Engine API to App Store developers, but individual apps must integrate it. An app that has not updated to support SiriKit 4.0 will not be accessible to the Action Engine.

The practical utility of the Action Engine scales directly with how many of your frequently used apps adopt the new API. Early adoption will likely be uneven, with major apps integrating first and smaller utilities following over subsequent months.

Can the Action Engine interact with third-party apps without my permission?

According to Apple’s WWDC 2026 documentation, the Action Engine operates via standard API hooks. The permission model follows the existing iOS privacy framework, meaning apps must declare their Action Engine capabilities and users retain control over which apps Siri can interact with autonomously.

The specifics of the permission grant flow are still being detailed in developer beta documentation. Users who want granular control should review the privacy settings introduced in iOS 27 before enabling broad Action Engine access.
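The described model has two gates: an app must declare an action, and the user must approve that app. A minimal sketch of that check follows. The `PermissionStore` class and its method names are assumptions for illustration, since the real permission flow is still being documented.

```python
class PermissionStore:
    """Hypothetical two-gate check: app declaration plus user approval."""

    def __init__(self):
        self._declared = {}    # app id -> set of action names the app exposes
        self._granted = set()  # app ids the user has approved

    def declare(self, app_id, actions):
        """Called on behalf of an app to list what it exposes."""
        self._declared[app_id] = set(actions)

    def grant(self, app_id):
        """Called when the user approves autonomous access to an app."""
        self._granted.add(app_id)

    def revoke(self, app_id):
        self._granted.discard(app_id)

    def is_allowed(self, app_id, action):
        """An action runs only if it is declared AND the app is approved."""
        return app_id in self._granted and action in self._declared.get(app_id, set())


store = PermissionStore()
store.declare("com.example.notes", ["notes.create"])

print(store.is_allowed("com.example.notes", "notes.create"))  # not yet granted
store.grant("com.example.notes")
print(store.is_allowed("com.example.notes", "notes.create"))  # declared and granted
print(store.is_allowed("com.example.notes", "notes.delete"))  # never declared
```

Revoking access for an app closes the gate again without touching what the app has declared, which matches the idea that users retain control over which apps Siri can act in.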

What happens to Campo features if I switch from iPhone to Android?

The Campo architecture is entirely Apple-ecosystem-specific. The localized vector database, the on-device NPU processing, Handoff 3.0, and the Action Engine are all tied to Apple hardware and the iOS/macOS software stack.

There is no cross-platform continuity. If you move to Android, your Siri interaction history and Campo context do not transfer. This is both a privacy feature and an ecosystem lock-in, depending on your perspective.

Is iOS 27 available for iPad?

Apple’s WWDC 2026 announcements covered iOS 27 and its macOS counterpart. iPadOS 27 was announced in parallel, and Campo features are expected to follow the same chip-tier requirements on iPad hardware.

iPads running M-series chips are positioned to handle the full feature set. Older iPad models with A-series chips below the A20 threshold will face the same reduced-capability situation as older iPhones.

Will older iPhones receive any Campo features at all?

Yes, partially. Apple has historically extended some software features to older hardware with reduced functionality. Devices on A19 and earlier chips are expected to receive elements of iOS 27, including some Siri improvements, but the on-device processing ratio and persistent memory architecture are not replicable without the A20’s NPU generation.

The distinction matters: receiving iOS 27 is not the same as receiving the Campo experience. Managing that expectation before upgrading is worth doing.

Final Verdict: Is iOS 27 Apple’s “iPhone Moment” for AI?

The “iPhone Moment” framing is seductive, but it deserves scrutiny. The original iPhone moment was defined by a product that worked, out of the box, for everyone who bought one. iOS 27 and the Campo architecture are not that, at least not yet.

What Apple has built is a credible, architecturally coherent AI strategy. The combination of on-device processing via the A20 chip, the localized vector database, and the Action Engine represents a genuinely differentiated approach from competitors who lean heavily on cloud inference.

The privacy angle is real, not just marketing. Independent auditing of Private Cloud Compute 2.0 moves Apple’s claims closer to verifiable fact than anything the company has offered before. That matters for enterprise adoption and for users who have been skeptical of cloud AI products.

The Gemini 1.5 Pro integration is the most strategically interesting move. Apple is effectively admitting that its proprietary models have gaps in creative and coding tasks, and it is filling those gaps with a competitor’s technology. That is pragmatic, but it introduces a dependency that Apple does not fully control.

The hardware fragmentation problem is the most honest criticism. A feature set gated behind the A20 chip means a large portion of the installed iPhone base gets a diminished experience. Apple’s AI ambitions are, in practice, an upgrade incentive as much as a software update.

Developer adoption of SiriKit 4.0 will determine whether the Action Engine becomes genuinely useful or remains a demo feature. The API is open, but the ecosystem has to build around it. That takes time, and the first year of availability will likely be uneven.

Compared to Galaxy AI’s cloud-heavy approach, Apple’s architecture prioritizes privacy and hardware integration over raw feature breadth. Compared to GPT-5 Mobile, Apple wins on personal context and local execution speed, but loses on general reasoning depth for tasks that require it.

The honest assessment: iOS 27 is the most technically ambitious version of Siri ever shipped. It is also a platform that will take 12 to 24 months to reach its stated potential, contingent on developer adoption, completed audit transparency, and real-world performance data outside controlled demos.

For users on A20-equipped devices who are already inside the Apple ecosystem, the upgrade case is strong. For everyone else, the Campo experience is a future promise more than a present reality.

- ✓ Localized Persistent Memory ensures high privacy without sacrificing context.

- ✓ Integrated Multimodal Action Engine allows control over third-party apps.

- ✓ Optimized on-device execution (70%) reduces latency and cloud costs.

- ✓ Seamless Cross-Device Handoff 3.0 maintains AI state across the ecosystem.

- ✕ High hardware requirements (A20 minimum for full feature set).

- ✕ Beta limitations on ‘Action Engine’ capabilities at launch.

- ✕ Data regionality may limit features in specific markets (EU/China).